Presenters: Elizabeth Fleitz, Betsy Melick, and Sue Edele (Lindenwood University)

With generative AI technology advancing rapidly and gradually making its way into composition classrooms, rhet-comp researchers, writing instructors, and writing program administrators continue to rethink writing approaches in ways that integrate modules on critical AI literacy, ethical AI use, and the development of students’ voices. Engaging this prevalent discourse in writing studies, the panelists of “Developing AI Literacy in Composition Courses” examined how a partial redesign of the composition curriculum offers practical methods for critically introducing AI within writing classrooms.

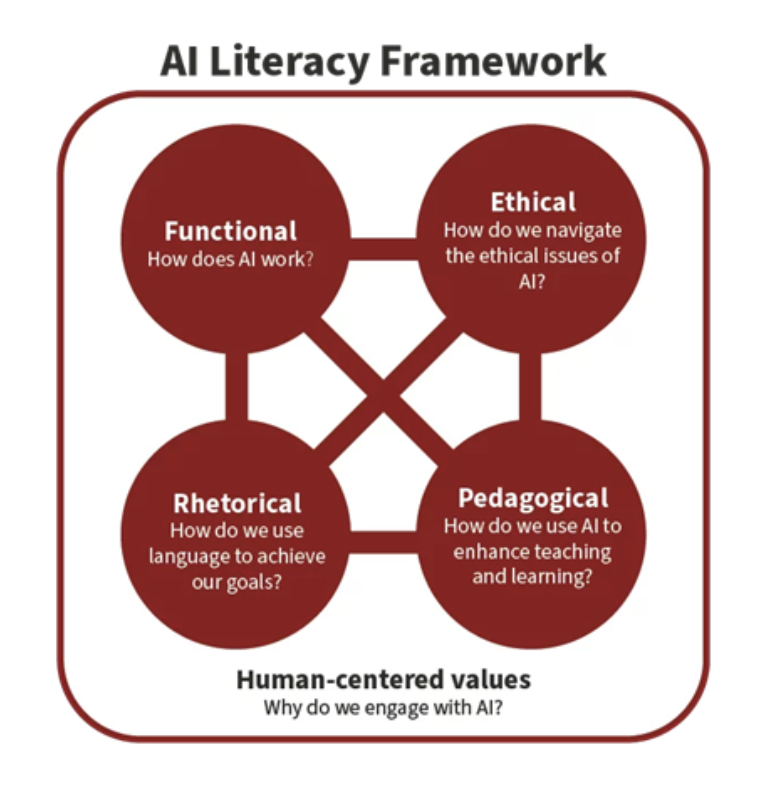

The speakers’ presentation focused on how remodeling the composition course can significantly shape students’ perspectives on AI literacy as it intersects with writing studies. Elizabeth, Betsy, and Sue held similar perspectives about how AI’s presence within composition classrooms is affecting writing processes, hence their call for pedagogical adjustments in the course curriculum. They propose a critical-ethical stance for working with generative technologies, emphasizing that AI use should be framed around ethical considerations. The panelists adopted the Stanford framework to restructure the curriculum by integrating its functional, rhetorical, and ethical literacy components. Since the framework’s pedagogical component is best suited to writing instructors, the presenters centered the other three during the redesign.

Presentations

To begin, the first presenter, Betsy Melick, provided contextual background about their department and its diverse positions on AI, noting that three groups existed: (1) those who are excited about AI technology, (2) those who showed limited enthusiasm for it, and (3) the panelists themselves, who sit between the two groups, neither averse to AI nor deeply engaged with it. This middle position drives their urgency to develop a curriculum that centers AI literacy for students.

Grounded in the Stanford AI literacy framework, as shown in Figure 1 below, their study argued for four forms of literacy through which students could be taught to develop critical AI awareness. A curriculum built around this framework helps students understand and differentiate between AI tools, learn their functionalities, and decode ethical issues for informed decision-making. Integrating this framework meant designing targeted assignments to equip students with literacy skills in the four domains. The ‘AI and Your Voice’ activity was central to this work: it helped students identify their unique voices by studying diverse human-authored passages. By repeating the activity with generative AI and analyzing the outputs, students came to understand the power of the human voice in writing, how it differs from generated text, and the ways AI may inhibit or develop their voice. Ethical literacy was taught by presenting students with an AI course policy through which they explored personal, academic, and professional examples. Each activity enabled students to reflect critically, make decisions about using or abstaining from generative technologies, unpack possible consequences of their choices, and curate personal ethical codes on AI use. Betsy highlighted that the study, which is ongoing, uses pre- and post-test activities, including students’ reflections, to evaluate the three adopted literacy domains at the beginning and end of the semester. On students’ attitudes toward using AI to take a stance on a topic and to draft essays, Betsy stated that students considered both uses inappropriate, which reflected informed awareness.

According to Elizabeth Fleitz, the second speaker, the uncritical adoption of AI has been a longstanding concern in academia. This issue calls for a blueprint for navigating AI that moves beyond simply prompting AI tools and toward understanding the objectives behind using or refusing generative technologies in writing classrooms. Positioning generative technologies as tools that guide users during specific writing stages helped shape students’ views of their own cognitive contribution to writing and increased their critical and rhetorical awareness for responsible use. Additionally, assigning readings that reflect other college students’ experiences with AI was a useful strategy for helping students ease into and contextualize the conversation. Elizabeth emphasized the significance of teaching the diverse tools that constitute AI technologies, since students failed to recognize Google Search, Grammarly, and similar tools as extensions of AI software. She also stressed the value of analyzing prompts to determine how successful the output is. She shared that reflections from classroom activities create a space for students to think about their reasons for engaging with AI, the “human-in-the-loop” system, and the power of the human voice in writing.

The third speaker, Sue Edele, the writing center director and academic integrity officer at Lindenwood University, entered the conversation by emphasizing that the panelists do not position themselves as “AI police.” Speaking of writing center experiences, Sue noted that students turned to AI because they had difficulty keeping up with numerous assignments, were uncertain about when to use AI, or found some assignments incoherent. Centering ethos, pathos, and logos in writing, Sue observed the need to promote credibility and critical thinking during interactions with AI, as this enables students to consciously evaluate information pulled from generative technologies. Incorporating activities that underscore prompt writing is also beneficial, considering that some students had minimal knowledge about effective prompting and iterating with AI. According to Sue, some of her students resisted generative AI because of its negative environmental impact, fear of over-dependence, its effect on critical thinking, and privacy concerns. To advance the study’s goal while recognizing these worries, the panelists encourage classroom instruction on AI literacy and critical conversations about the skills required to navigate these technologies ethically, beyond the writing classroom and across students’ literate and professional lives.

Takeaways

The panel presentation gave attendees a lot to think about and offered best practices for future conversations about ethical engagement with AI in writing classrooms. While the proliferation of generative AI technologies cannot be checked, I believe the panel discussion provided instructors and participants with strategies for facilitating and redirecting students’ perspectives on AI discourse. For students, the interactive activities should promote an in-depth understanding of how over-reliance on AI could diminish critical thinking, inhibit writers’ voices, and suppress learning opportunities. To conclude, the panelists expressed their excitement about the study’s progress and its impact on students, especially students’ development of personal ethical AI codes and the way AI literacy instruction could transcend the composition classroom and shape their interaction with generative technologies in their respective fields. As a reviewer interested in conversations around digital and AI literacy, I look forward to seeing how this study contributes to advancing discussions on AI ethics, critical awareness, and agency.